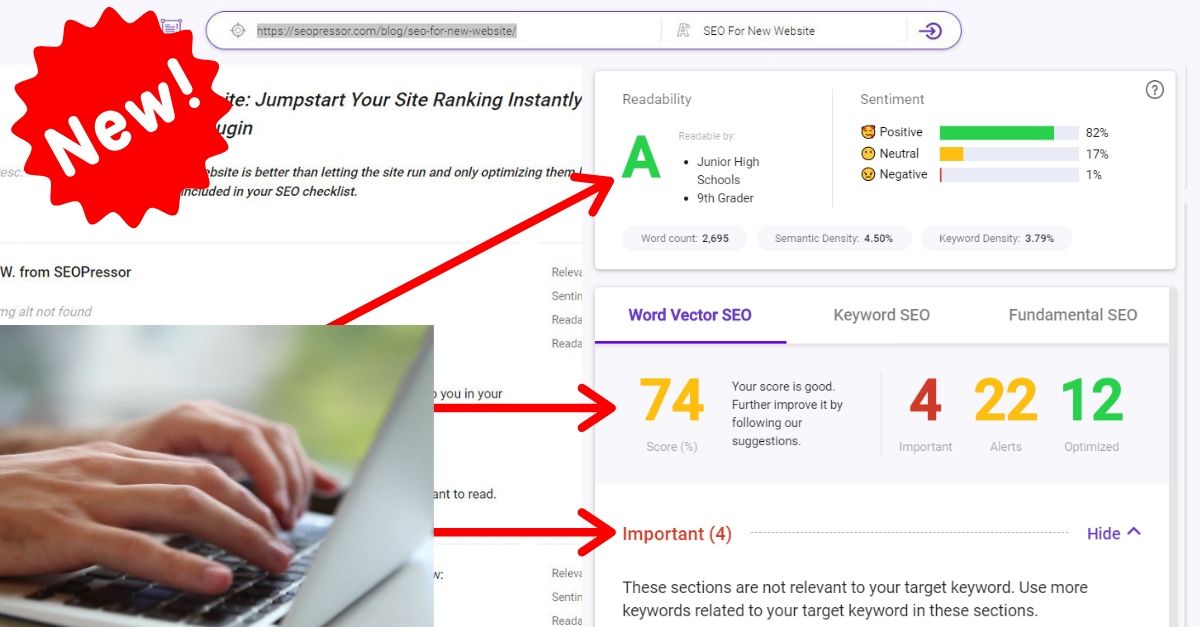


By jiathong on August 3, 2019

Welcome to the July SEO updates post, where we'll bring you up to date with the latest news in the SEO industry throughout the month.

You can check out our index for news labeled New! to find out what happened throughout the week or just go through our slide shows for a quick bite of what’s happening this week.

SERP tracking tools all showed movement on July 11, 12, 16 and 18, which, more likely than not, indicates that Google was tweaking something in its algorithms.

However, Google didn't budge when asked for details, which is very unlike how it pre-announced the June core update.

Webmasters report that things seem to have calmed down. If there's one thing we can learn from Maverick, it's not to wait for Google's announcement.

Google is spearheading an effort to make the Robots Exclusion Protocol a web standard

Google posted a series of tweets about the Robots Exclusion Protocol (REP), also known as robots.txt, from its Google Webmasters account, leading up to the announcement of its effort to make the protocol an official standard.

Today we're announcing that after 25 years of being a de-facto standard, we worked with Martijn Koster (@makuk66), webmasters, and other search engines to make the Robots Exclusion Protocol an official standard! https://t.co/Kcb9flvU0b

— Google Webmasters (@googlewmc) July 1, 2019

The effort was carried out by Google with Martijn Koster (the original author of the protocol), webmasters, and other search engines.

Following the announcement, Google also:

1. Published a blog post discussing REP (read it here)

2. Updated its official developer documentation on REP (read it here)

3. Released its robots.txt parser as open source (read it here)
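Google's REP documentation describes how conflicting rules are resolved: the most specific rule, meaning the one with the longest matching path, wins, and when an Allow and a Disallow rule match equally well, the less restrictive Allow wins. The sketch below illustrates only that precedence, under simplifying assumptions: plain prefix matching (no `*` or `$` wildcards), a single user-agent group, and hypothetical names (`is_allowed`, `rules`). It is not Google's open-source parser, which is written in C++.

```python
from typing import List, Tuple

# Hypothetical rule list for one user-agent group: (directive, path prefix).
Rule = Tuple[str, str]


def is_allowed(rules: List[Rule], path: str) -> bool:
    """Return True if `path` may be crawled under longest-match precedence.

    Simplified sketch: prefix matching only, no * or $ wildcards.
    """
    best_len = -1
    best_allow = True  # no matching rule means crawling is allowed
    for directive, prefix in rules:
        if not prefix:
            # An empty path (e.g. "Disallow:") matches nothing.
            continue
        if not path.startswith(prefix):
            continue
        allow = directive.lower() == "allow"
        if len(prefix) > best_len:
            # A longer (more specific) match overrides shorter ones.
            best_len, best_allow = len(prefix), allow
        elif len(prefix) == best_len and allow:
            # On a tie, the less restrictive Allow rule wins.
            best_allow = True
    return best_allow


rules = [
    ("Disallow", "/private/"),
    ("Allow", "/private/press/"),
]
print(is_allowed(rules, "/private/report.html"))       # False
print(is_allowed(rules, "/private/press/2019.html"))   # True
print(is_allowed(rules, "/blog/"))                     # True
```

Scanning every rule rather than stopping at the first match is what makes the result independent of the order in which rules appear in the file.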

Google will drop support for unofficial Robots Exclusion Protocol directives starting September 1st

Google followed up with another blog post about unsupported rules in robots.txt here.

If you've been using your robots.txt to specify noindex, nofollow, or crawl-delay directives (all of which are unofficial), you'll have to find another way to achieve the same result before September 1st, or things might get ugly.

That is especially true for those who are using noindex to hide low-quality content from the search engine index. Come September 1st, you might find a lot of content you intended to keep off the SERP being crawled and indexed, and thus showing up on the results page instead.
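Google's documentation lists the fields it supports in robots.txt as user-agent, allow, disallow and sitemap. A quick way to audit your own file ahead of the change is to scan it for any other field. The sketch below is a hypothetical helper (the function name and output format are our own) that flags lines such as noindex, nofollow or crawl-delay; it only looks at field names and does not validate rule values.

```python
from typing import List, Tuple

# Fields Google documents as supported in robots.txt.
SUPPORTED_FIELDS = {"user-agent", "allow", "disallow", "sitemap"}


def find_unsupported_rules(robots_txt: str) -> List[Tuple[int, str]]:
    """Return (line number, field) pairs for fields Google does not support."""
    findings = []
    for lineno, raw in enumerate(robots_txt.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line or ":" not in line:
            continue
        # Split on the first colon only, so URLs in Sitemap lines stay intact.
        field = line.split(":", 1)[0].strip().lower()
        if field not in SUPPORTED_FIELDS:
            findings.append((lineno, field))
    return findings


sample = """User-agent: *
Disallow: /tmp/
Noindex: /thin-content/
Crawl-delay: 10
Sitemap: https://www.example.com/sitemap.xml
"""
for lineno, field in find_unsupported_rules(sample):
    print(f"line {lineno}: '{field}' is not supported by Google")
```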

If you're currently using the noindex directive in your robots.txt, Google suggests several alternatives:

1. Noindex in robots meta tags

2. 404 and 410 HTTP status codes

3. Password protection

4. Disallow in robots.txt

5. Search Console Remove URL tool
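Of these alternatives, the robots meta tag is the most direct replacement, and the same directive can also be sent as an `X-Robots-Tag` HTTP header. The sketch below is a hypothetical checker built on Python's standard `html.parser` that reports whether a page opts out of indexing through either mechanism. It assumes you already have the response headers and HTML (fetching is left out), and for simplicity it ignores crawler-specific variants such as `<meta name="googlebot">` or header values like `googlebot: noindex`.

```python
from html.parser import HTMLParser
from typing import Dict, List


class RobotsMetaParser(HTMLParser):
    """Collects the directives from <meta name="robots"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None (e.g. bare attributes), so default to "".
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() == "robots":
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def is_noindexed(headers: Dict[str, str], html: str) -> bool:
    """True if the X-Robots-Tag header or a robots meta tag contains noindex."""
    for name, value in headers.items():
        if name.lower() == "x-robots-tag":
            if "noindex" in [d.strip().lower() for d in value.split(",")]:
                return True
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(is_noindexed({}, page))                                   # True
print(is_noindexed({"X-Robots-Tag": "noindex"}, "<html></html>"))  # True
print(is_noindexed({}, "<html></html>"))                        # False
```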

This only affects those who are trying to use noindex in their robots.txt file. So before you panic, remember that noindex used in your HTML is not affected.

Just in case anyone is confused about the robots.txt announcement by Google yesterday, it is only a concern if you are trying to use noindex in your robots.txt file. (Most sites are not doing this.)

Noindex used in the HTML of your pages is not changing.

— Marie Haynes (@Marie_Haynes) July 2, 2019

Frédéric Dubut from Bing also chimed in, tweeting that Bing never supported any of those unofficial directives, so now is definitely the right time to fix this if you weren't aware of the issue before.

The undocumented noindex directive never worked for @Bing so this will align behavior across the two engines. NOINDEX meta tag or HTTP header, 404/410 return codes are all fine ways to remove your content from @Bing. #SEO #TechnicalSEO https://t.co/ukKhfRPWzO

— Frédéric Dubut (@CoperniX) July 2, 2019

Now that the Robots Exclusion Protocol will be standardized, we’re positive that gray area practices such as these will be ironed out and webmasters will have an easier time controlling crawling behavior.

A number of SEOs have reported that their Google My Business listings were suspended after adding a short name to their profile.

Introduced in April, short names let businesses create custom URLs for their Google My Business listings.

Now, suspicions have been raised that adding a short name might be causing legitimate business listings to be suspended and removed from the SERPs.

It appears that the rumors may be true – adding a @GoogleMyBiz shortname can cause your listing to be suspended???

I am working on a 100% legitimate business profile with 0 quality/spam issues – we just added a shortname and we are now suddenly suspended. Google, what gives? https://t.co/blKJMGpwee

— Lily Ray (@lilyraynyc) July 9, 2019

Not all businesses are getting suspended for adding short names, but it is a common theme among a series of seemingly random suspensions.

Google hasn’t confirmed if there’s a bug related to Google My Business short names, nor has it acknowledged that it’s even aware of this issue.

So far, all this evidence is anecdotal, and the consensus is that removing short names fixes the problem. Hence if you’ve recently had a Google My Business listing suspended after adding a short name, your best course of action is to remove it.

Someone recently tweeted John Mueller asking about trailing slashes at the end of URLs.

John answered that it is best to keep your URL structure consistent: either always use a trailing slash or never use one.

If possible, do not use both formats at the same time.

The best solution is to be consistent and only use one version of a URL. Link to that version, redirect to it, use it in sitemaps, use it for rel-canonical, etc.
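One way to follow this advice is to pick a single form and redirect every other variant to it. The sketch below assumes a site that has chosen the trailing-slash form for directory-style paths while leaving file-like paths alone (those whose last segment contains a dot, such as `/feed.xml`); the function names and that file heuristic are our own assumptions, not something John Mueller prescribed. The canonical URL it returns is the one you would link to, 301-redirect to, list in sitemaps and use in rel-canonical.

```python
from typing import Optional
from urllib.parse import urlsplit, urlunsplit


def canonical_url(url: str) -> str:
    """Return the trailing-slash version of a URL (hypothetical site policy)."""
    parts = urlsplit(url)
    path = parts.path or "/"
    last_segment = path.rsplit("/", 1)[-1]
    # Leave file-like paths (e.g. /feed.xml) untouched; add a slash otherwise.
    if not path.endswith("/") and "." not in last_segment:
        path += "/"
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


def redirect_target(url: str) -> Optional[str]:
    """Return the URL to 301-redirect to, or None if already canonical."""
    target = canonical_url(url)
    return None if target == url else target


print(redirect_target("https://www.example.com/blog"))   # https://www.example.com/blog/
print(redirect_target("https://www.example.com/blog/"))  # None
```

Keeping the query string and fragment untouched means only the path form changes, so existing tracking parameters survive the redirect.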

— 🍌 John 🍌 (@JohnMu) July 11, 2019

On July 15th, 2019, Google announced that it will be removing the Google AdSense mobile apps (Android and iOS) from the app stores by the end of 2019.

So… what’s next?

Google AdSense will be focusing all its resources on the mobile web interface. After 2019, you will need to open your browser to use Google AdSense. Do you think this is a good move?

Read the article on Google’s Blog here

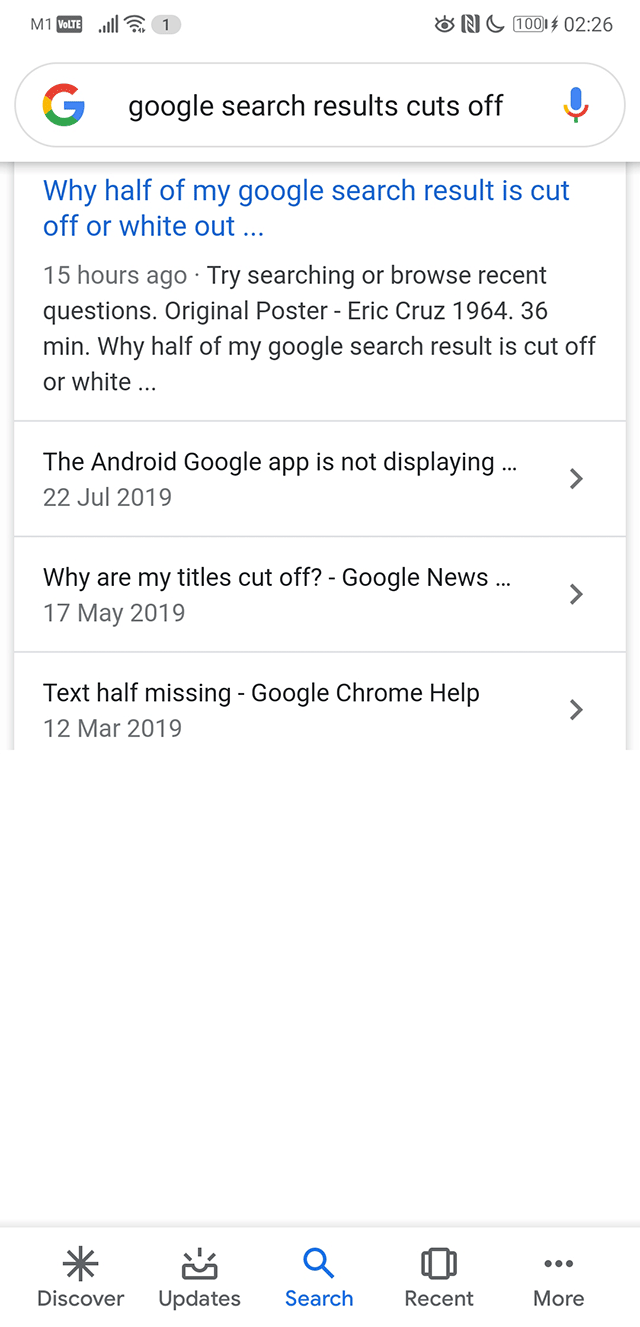

A lot of people have been complaining about Google search results not loading completely. It looks as if your browser simply stopped loading and left half of the page blank.

(source)

That can’t be a great user experience.

Luckily, Google is aware of the issue and reported earlier today that the bug has been fixed. Are you still seeing the cut-off search results page, or is everything all good again? We'd love to know!

This appears to have been fully resolved now.

— Google SearchLiaison (@searchliaison) July 25, 2019

Google Ads users, listen up!

Google has upgraded Google Ads Editor from v1.0 to v1.1. This update brings a lot of changes: Google Ads Editor has added, updated and deprecated a few features.

The full list and brief descriptions are as below:

An SEO tweeted about a sticky preview box in image search.

New @Google image loading split test is pretty cool. Not sure if seen before.

At first glance, the right-alignment was weird, but I’m already used to it. Loading speed is 2x as fast because no waiting for down-scrolling. @rustybrick pic.twitter.com/hCCT1dRGWO

— SEOwner (@tehseowner) July 2, 2019

We successfully replicated it with our own search term.

That definitely makes it easier to navigate the image SERP. What about you? Let us know if you’re being served the sticky preview box or nah.

The “related search” box in image results isn't really new, but more people are now seeing it appear more often: roughly once every 15 to 20 images as you scroll through the results in Google Images.

While there's no data on how often these boxes are clicked, this may well be a sign that we should pay more attention to image optimization.

That's a serious amount of Related Search boxes on the Image SERP!

Has anyone ever seen this many? #SEO #Search pic.twitter.com/kRPKpQTkQK

— Mordy Oberstein (@MordyOberstein) July 11, 2019

Learn more about how to use SEOPressor to optimize your image.

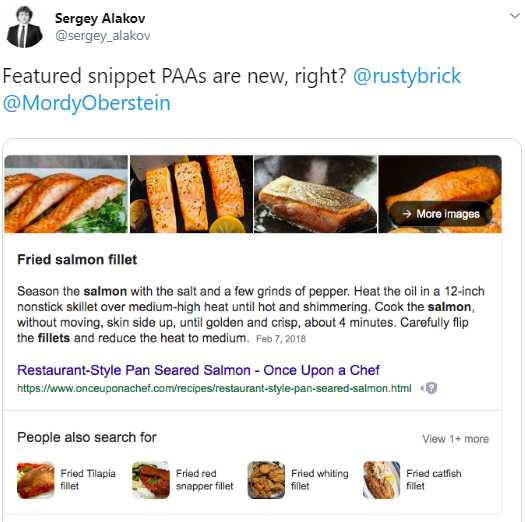

Twitter user @sergey_alakov shared a picture of a featured snippet with a “People also search for” box underneath it, which is rather new. We had never seen it on the SERP before, and we can't see it now either.

See the picture for yourself; are you able to replicate it? Here’s the tweet from @sergey_alakov:

Are you able to see this on your SERP?

We think this feature will actually enhance the user experience, allowing the users to navigate and explore easily (on Google). What do you think of this feature?

If you've been following AMP, you'll know that AMP pages are often given quite an edge in Google's SERP.

And they're getting more powerful with this new SERP feature in Google Images.

When users click on an image result, they are shown a preview of the website's header, which is only available via AMP. When users swipe up on the preview, they're taken straight to the web page.

A neat trick to bring in more visits.

A few days ago, Danny Sullivan of Google wrote a post on the Google Webmaster Central Blog titled “What webmasters should know about Google's ‘core updates’”.

No, it's not a new core update! It is, however, a new blog post on what webmasters should know about Google’s core updates: https://t.co/e5ZQUA3RC6

— Google SearchLiaison (@searchliaison) August 1, 2019

In that post, Danny Sullivan shared a lot of information about the Search Engine Results Page (SERP). First of all, the post states that Google releases one or more changes each day to improve its search results.

Next, Google aims to confirm updates that are more noticeable and those where it feels webmasters are able to take action.

Google confirms broad core updates because sites may notice drops or gains, and it knows that webmasters will look for ways to fix them. Google does not want webmasters to change the wrong thing, and has indicated that sometimes there is nothing to fix.

So… if your page is not performing well after a core update, don't panic. A drop doesn't mean your page has violated Google's webmaster guidelines; Google simply thinks that other pages now do better, hence the changes.

An example given in the post is:

It was suggested that if webmasters really want to do something after experiencing the changes, they should focus on providing the best content. It is what Google’s algorithms seek to reward.

To self-assess whether you’re offering quality content, Google has provided updated advice with a set of questions to ask yourself about your content. You can find them on the post: What webmasters should know about Google’s “core updates”

Last but not least, Google said that the core updates may also affect Google Discover.

I believe the post has clarified many things. So remember, webmasters: always provide the best content you can.

Earlier, on 10th July 2019, we reported that there were a few people facing missing GMB listings after adding shortnames.

A few days later, Google told @sengineland that the missing business listings were not related to short names but were caused by a technical issue.

The Google My Business listings issue was finally fixed on 16th July 2019. However, some have said that the issue was not fixed. Were your GMB listings and reviews affected? View thread here.

@rustybrick shared on Twitter that Google may be testing a “share” button and other features in search results. Google's @dannysullivan later confirmed that it is a test.

What's your take on this? With these buttons on the search engine results page, we think users can take action more quickly and easily.

If you're an avid user of Chrome DevTools, there's good news! Addy Osmani from the Chrome team announced that DevTools now offers better autocomplete values for some CSS properties as you type. Handy if you can't recall the full syntax for things like gradients, transforms, filters and more.

.@ChromeDevTools now has better autocomplete values for some CSS properties. Helpful if you can't remember the full syntax for gradients, transforms, filters etc. pic.twitter.com/LRk89gYVRP

— Addy Osmani (@addyosmani) July 5, 2019

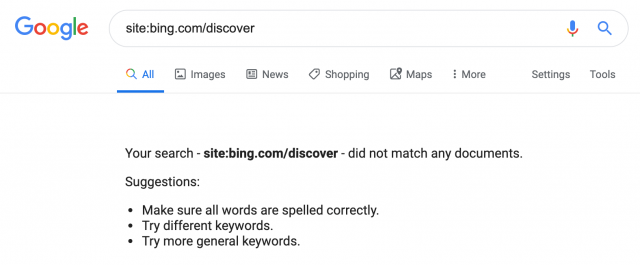

Recently the SEO community has been buzzing about how Google seems to have removed Bing's entire Discover section from its search index.

It came to light when Edd Wilson tweeted about it on July 4, and several sites have since picked up the story.

@matthewbarby Do you know what happened to Bing's discover section? Organic traffic seems to have just fallen off. I understand there's a redirect on the main discover section but all subjects are still hosted under there and are no longer indexed… pic.twitter.com/d7zZPdXJfW

— Edd Wilson (@EddJTW) July 4, 2019

The thing with Bing Discover is that, prior to this incident, it had been getting millions of organic visits from Google search.

So either Google decided this was a bad search experience and dropped the pages, or something else is going on. Find out what's going on, plus more news, in our slides above.

Search Engine Land's Barry Schwartz reported his findings on Bing Discover's disappearance from Google's SERP, and it all boils down to one sentence in Google's webmaster guidelines:

“Use the robots.txt file on your web server to manage your crawling budget by preventing crawling of infinite spaces such as search result pages.”
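For your own site, following this guideline usually means disallowing internal search result URLs in robots.txt. The sketch below assumes a site whose internal search lives under `/search` (a hypothetical path) and uses Python's standard `urllib.robotparser` to confirm the rule blocks those URLs while leaving other pages crawlable. Python's parser is not Google's: it does not support wildcards and resolves rules in file order rather than by longest match, so treat it as a quick sanity check only.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt blocking an internal search section.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for url in (
    "https://www.example.com/search?q=seo+tips",
    "https://www.example.com/search/page/2",
    "https://www.example.com/blog/seo-tips/",
):
    verdict = "allowed" if parser.can_fetch("Googlebot", url) else "blocked"
    print(f"{verdict}: {url}")
```

Because rules match path prefixes, `Disallow: /search` would also block a page such as `/search-engine-tips/`, so choose the blocked path carefully.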

Bing Discover IS search results, and Google does not want search results in its search results. Welp, isn't that a mouthful.

Barry's not sure what prompted Google to drop Bing Discover all of a sudden; perhaps Google just caught on, or decided enough was enough.

Google declined to comment on the subject, so I guess that'll remain another mystery.

On 25th July 2019, Google launched a video series called #AskGoogleWebmasters. The video series is led by Google’s Webmaster Trends Analyst, John Mueller.

We think that this is a pretty good initiative. Searching for a video on YouTube is a much simpler task than scrolling through Google Webmasters' Twitter feed.

The first video was released on 26th July 2019, and it's about whether outbound links help or hurt SEO.

John Mueller did not specifically answer the question. But here’s what he said:

“Linking to other website is a great way to provide value to your users.”

We all know that Google's priority is always to provide a great user experience and value to users, so what do you think? Does it help SEO?

There are some links to watch out for, as mentioned in the video: arranged links, advertisements and links in comments. For these kinds of links, it is advised to use rel="nofollow".
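In HTML, this simply means adding a `rel` attribute to the anchor tag. The sketch below is a hypothetical helper that builds a link and adds `rel="nofollow"` for the link types flagged in the video (arranged links, advertisements and links in comments), while leaving editorial links followed. The `make_link` name and the link-type labels are our own naming, not an official taxonomy.

```python
from html import escape

# Hypothetical labels for the link types the video says to nofollow.
NOFOLLOW_TYPES = {"arranged", "advertisement", "comment"}


def make_link(href: str, text: str, link_type: str = "editorial") -> str:
    """Build an <a> tag, adding rel="nofollow" for the link types above."""
    rel = ' rel="nofollow"' if link_type in NOFOLLOW_TYPES else ""
    # Escape the URL and text so user-supplied values can't break the markup.
    return f'<a href="{escape(href, quote=True)}"{rel}>{escape(text)}</a>'


print(make_link("https://example.com/", "A helpful resource"))
# <a href="https://example.com/">A helpful resource</a>
print(make_link("https://example.com/promo", "Sponsored offer", "advertisement"))
# <a href="https://example.com/promo" rel="nofollow">Sponsored offer</a>
```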

Ultimately, what webmasters should do is link out naturally and provide value to their users.

We’re looking forward to more videos by Google Webmasters. If you would like to have your questions answered by John Mueller, remember to include #AskGoogleWebmasters in your tweet!

[For information] For those of you who had trouble finding Google's Search Quality Rater Guidelines over the past few days, here's why.

Google has decided to move them to another URL. If you type in the old URL now, you should be redirected to the new URL.

Here’s the new URL in case you want to take a look at the updated version: Search Quality Rating Guidelines.

Frédéric Dubut, Bing’s Web Ranking & Quality Program Manager, asked the public for recommendations on improving their Webmasters Guidelines.

SEO friends! 👋 I'm kicking off an effort to refresh our @Bing webmaster guidelines, both the spirit and the letter. Any shady tactics you think are not penalized enough? Any feedback on the document itself? https://t.co/Md2iZECrjQ

— Frédéric Dubut (@CoperniX) July 30, 2019

It seems like Bing is trying really hard to improve. Here are its current Webmaster Guidelines: Bing webmaster help & how-to

If you have any thoughts on them, share them with Frédéric Dubut on Twitter: @CoperniX

Updated: 6 May 2026
