
On-Page SEO is DEAD!

Seeing that headline on Inbound.org the other day almost made me spit out my coffee.

For one, an “X is dead” title is the kind I least expect to show up in Inbound’s Trending feed, given how exaggerated such titles usually are.

Secondly, boldly claiming that on-page SEO is dead to a community made up mostly of marketers who swear by SEO is practically suicide.

I went through it, and it turned out to be more along the lines of “Exact match keyword is DEAD” than on-page SEO.

But the article brought a lot of data to the table, as well as some very good points – old-school on-page SEO has started to affect rankings less and less.

And that made me realize that, as THE on-page SEO specialists, we don’t actually write a lot about on-page SEO. So I figured it’s a good opportunity to write about it.

To be clear, NO. On-page SEO is NOT dead or dying. But of course, Google’s growing sophistication has made some practices obsolete. This, however, has made room for new optimization options.

So I thought it’s a good idea to compile a list of on-page SEO practices in 2017. New and old. What works and what doesn’t. I’ve come up with 29 items on the list so far.

Let’s have a go at it:

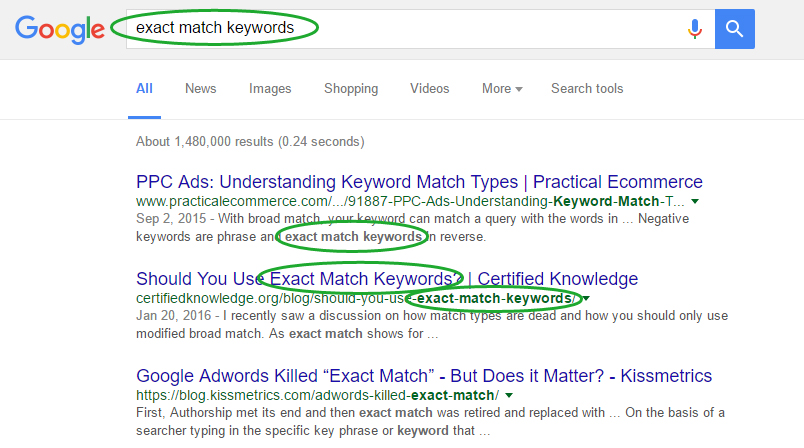

Using exact match keywords means fitting the target keyword exactly as it is in your content.

Many of the points in the “SEO is dead” article I mentioned are based on analyzing how well the use of exact match keywords correlates with high rankings.

The author’s research shows that using exact match keywords in on-page elements (title, headings, meta description, etc.) doesn’t correlate with high rankings at all.

In fact, there are some search results that don’t contain the exact match keyword in the title at all.

Exact match keywords used to be the main way Google matched results with search queries.

But keyword stuffing has been obsolete for quite a while already, especially given Google’s efforts to eliminate keyword spamming.

Algorithm updates like EMD and Panda ensure that if all you have is the same exact keyword repeated in your content, it won’t make it to the top ranks.

With that being said, I’m not saying using exact match keywords is totally bad. It just won’t help that much. Just remember to use them naturally rather than forcing them in, and everything’s good.

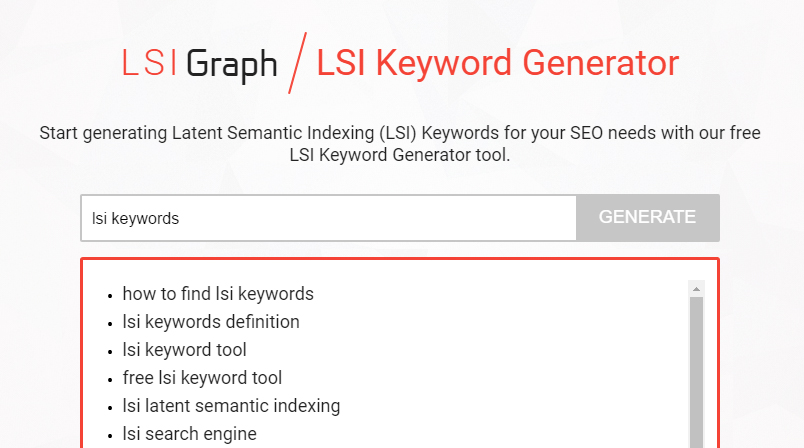

LSI Keywords are semantically related keywords often found in the same content accompanying the target keyword.

As an improvement over simply matching keywords, Google now seeks to understand the theme of each piece of content in order to know what it’s about.

Simply matching keywords won’t get Google far in most cases, since a keyword can have different meanings in different contexts.

So what Google does is look for LSI keywords: keywords semantically related to the main keyword. LSI keywords let Google make an accurate guess about what your content is about.

LSI can include synonyms of the target keyword. It can also include the keywords related to the theme of your content.

For example, when talking about “Bears”, including LSI keywords such as “diet”, “habits” or “largest” gives a stronger signal to Google that you’re talking about a grizzly bear.

Include keywords such as “mock draft”, “rumors” or “free agent” and Google will know you mean the Chicago Bears.

While it does sound hard at first, using LSI keywords is not as complicated as it seems.

Do include LSI keywords when doing your keyword research. Knowing the right LSI keywords to include in your content will be extremely helpful for your ranking.

You can find the right LSI keywords surrounding your target keywords by using tools such as LSIGraph.

SEOPressor Connect has also incorporated LSIGraph into our plugin for seamless LSI keyword addition while you create your content.

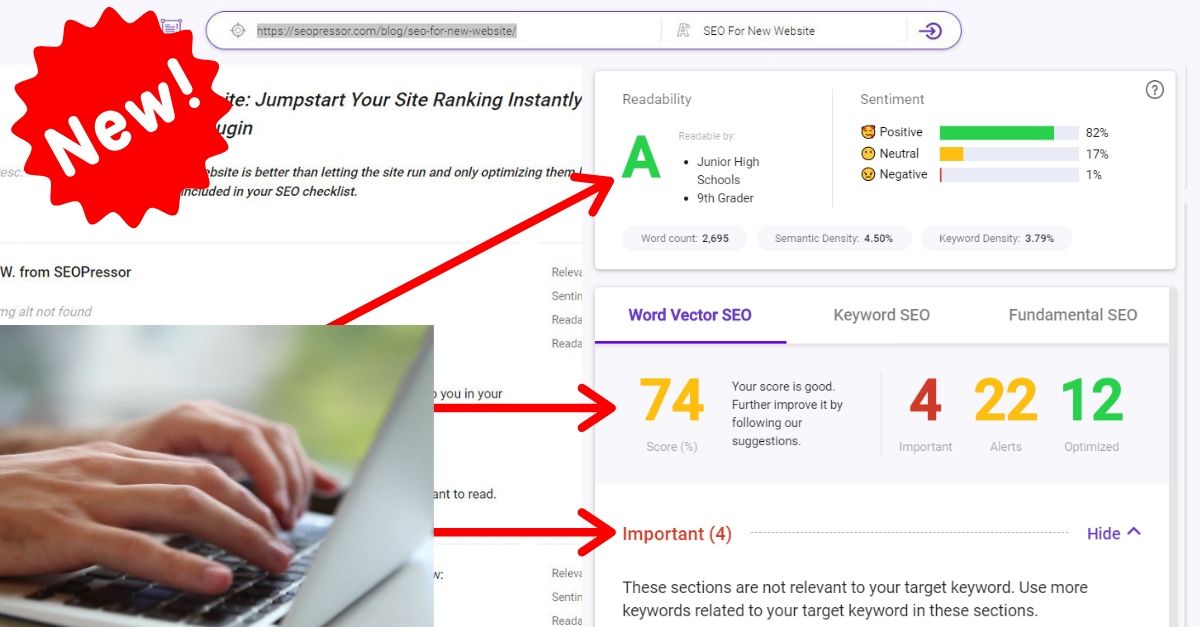

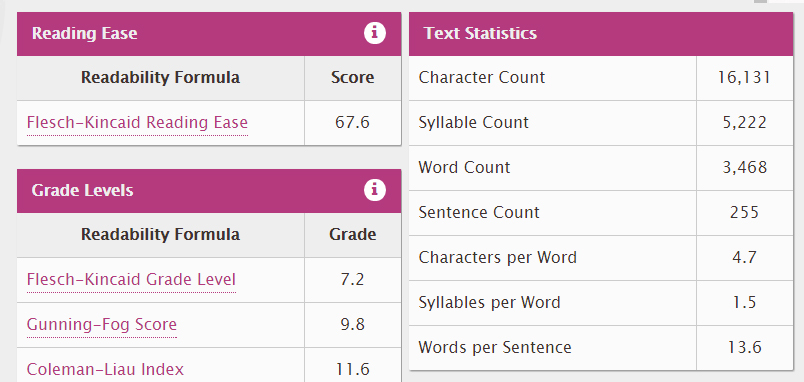

Readability score rates how easy your content is to read. The easier it is to read, the better for the reader.

Readability means how easy or how hard it is for readers to understand your content. And believe it or not, the readability of your content can actually be measured.

One of my favorite examples is the Flesch–Kincaid readability test. Flesch–Kincaid takes into consideration factors such as the total number of words, sentences and syllables.

The test then generates a score that falls into one of the following ranges:

Basically, you’d want to get your score at least into the 70 to 80 range, as that’s where most of your target customers will be. This can be quite a challenge, especially for marketers who deal with highly technical stuff.
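To make the scoring less abstract, here is a minimal sketch of the Flesch Reading Ease formula, the 0 to 100 scale the ranges above refer to. The syllable counter is a rough vowel-group heuristic I'm assuming for illustration, not what any particular tool uses, so expect scores to differ a little from online checkers.

```python
import re


def count_syllables(word: str) -> int:
    """Rough syllable estimate (assumption): count groups of consecutive vowels."""
    lowered = word.lower()
    count = len(re.findall(r"[aeiouy]+", lowered))
    # A trailing "e" is usually silent, so do not count it as a syllable.
    if lowered.endswith("e") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(text: str) -> float:
    """Flesch Reading Ease: higher scores mean easier text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text needs at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word
```

Short sentences and short words push the score up, which is exactly why plain writing scores better than jargon-heavy writing.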

Relaying complex information in a form understandable by the majority of the audience is not an easy task. But it’s a very rewarding skill to master.

It’s also a testament to someone’s understanding of the subject. As the famous quote goes, “If you can’t explain it simply, you don’t understand it well enough.”

While improving readability has an obvious benefit in increasing your readership, it might also help Google understand your content better.

With RankBrain and all the focus on the semantic web, Google has long since moved on from blindly matching keywords.

Google has started to understand content semantically and thematically. And if even humans have difficulty understanding it, how do you think algorithms and artificial intelligence will fare?

As a rule of thumb, use the KISS Principle – Keep It Simple and Straightforward.

Things you can do to increase readability:

You can use some tools available online on websites such as Readability-Score or The Writer.

If you’re using SEOPressor Connect then you don’t have to worry as we have incorporated a readability score in your SEO Scoring. *wink wink*

We’ll even suggest improvements you can make to instantly improve readability.

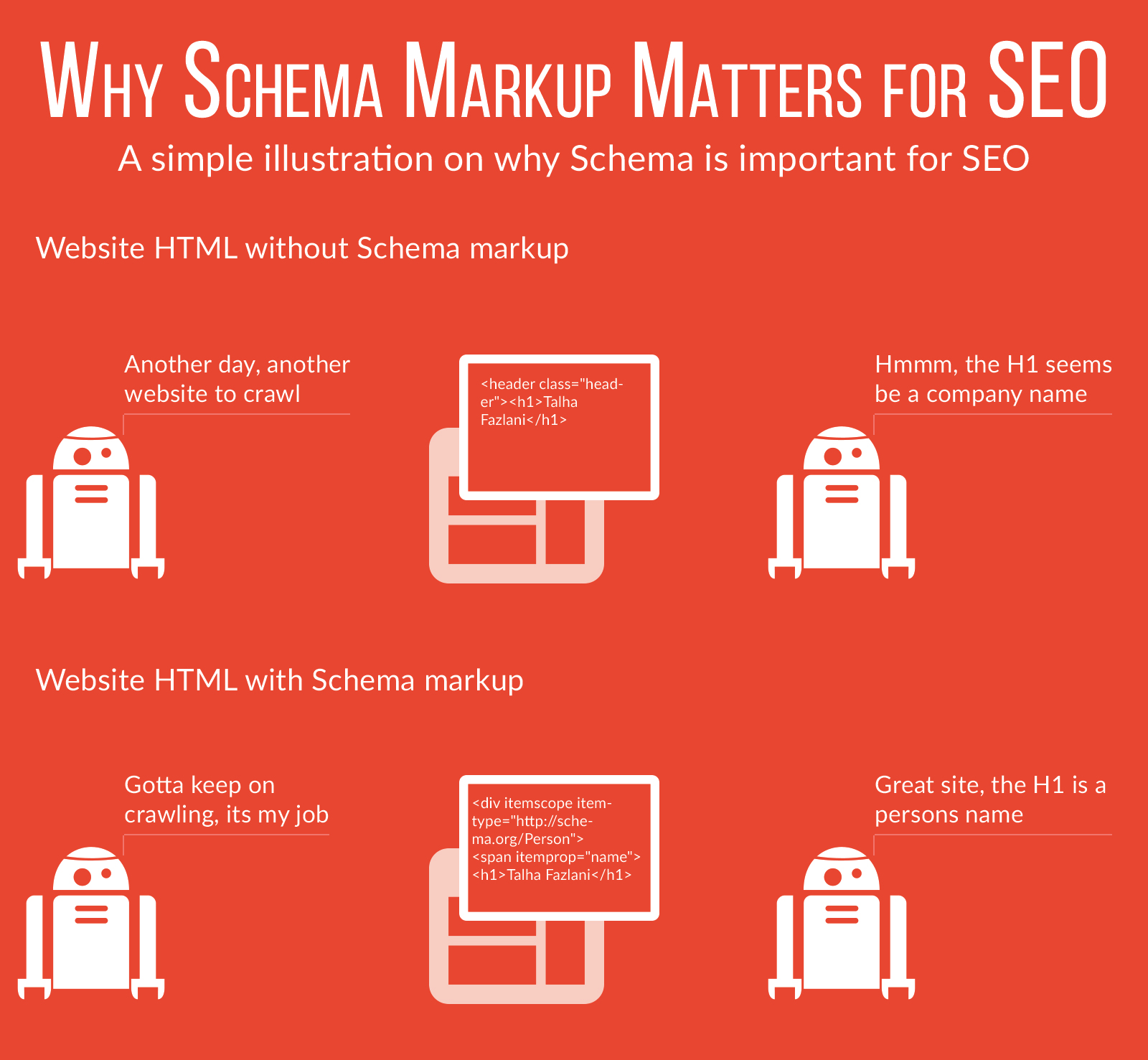

Markup describes your content in a way that machine readers can understand.

I mentioned the “semantic web” earlier. What’s that all about?

Basically, it’s the aim of making online content as understandable to machine readers as it is to humans.

I’ve written these 2 articles for better understanding on the subject:

Long story short, one of the ways of achieving this is by tagging or marking up your content with code that describes the content in a machine-friendly way.

There are a number of markup language standards but the two most important ones are:

You just add them to your normal code and they provide additional information that describes your content to search engines.
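As one illustration of what such markup can look like, here is a small sketch that builds a schema.org `Article` description in JSON-LD form. Choosing schema.org with JSON-LD is my assumption for the example, and the function name and sample values are hypothetical.

```python
import json


def article_jsonld(headline: str, author: str, date_published: str) -> str:
    """Return a <script> tag holding a schema.org Article description."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date, e.g. "2017-01-31"
    }
    # Escape "</" so a value can never close the script tag early.
    payload = json.dumps(data).replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"
```

The returned tag would go in the page's HTML, where crawlers can read it without it changing anything your visitors see.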

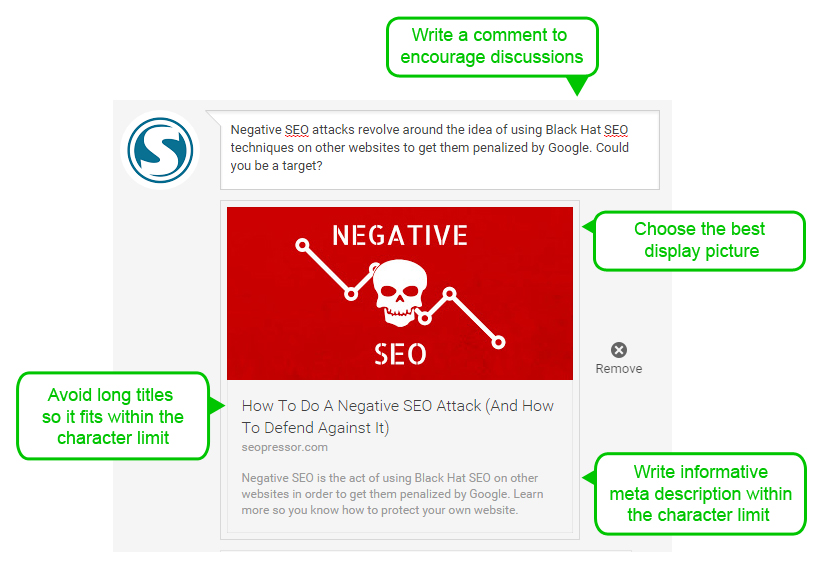

Using social markup like Facebook Open Graph gives you more control over how your links appear when shared.

Another improvement that can be made on-page is including social markup. For now there are two social markups available:

Now what do they do? Similar to the markups mentioned previously, they describe contents to machine readers – specifically Facebook and Twitter respectively.

But the purpose they serve is a bit different. Instead of improving your ranking, social markup improves the appearance of the link when people share your contents on said platforms.

Simply put, by using social markups, you will have more control over what is displayed on your links including:

Improving the appearance of your links on social media will increase click-through-rates as well as sharing rates.
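For a concrete picture, the sketch below renders a basic set of Open Graph (Facebook) and Twitter Card tags for a page. The function name is hypothetical, and the choice of the `summary_large_image` card type is an assumption for the example.

```python
from html import escape


def social_meta_tags(title: str, description: str, image_url: str, page_url: str) -> str:
    """Render Open Graph and Twitter Card meta tags for one page."""
    open_graph = {
        "og:title": title,
        "og:description": description,
        "og:image": image_url,
        "og:url": page_url,
    }
    twitter = {
        "twitter:card": "summary_large_image",  # assumed card type
        "twitter:title": title,
        "twitter:description": description,
        "twitter:image": image_url,
    }
    # Open Graph tags use the "property" attribute, Twitter Cards use "name".
    lines = [
        f'<meta property="{key}" content="{escape(value, quote=True)}">'
        for key, value in open_graph.items()
    ]
    lines += [
        f'<meta name="{key}" content="{escape(value, quote=True)}">'
        for key, value in twitter.items()
    ]
    return "\n".join(lines)
```

The output belongs inside the page's `<head>`, next to the regular title and meta description.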

Making your website look good on mobile devices can improve your ranking on mobile searches.

The Mobile-Friendly algorithm was a huge topic not long ago. What it does is serve modified search results on mobile devices.

Compared to desktop searches, searches made on mobile devices now favor results that are optimized for mobile viewing.

This is kind of a big deal as the number of mobile searches is shooting up and might soon leave desktop search in the dust.

A mobile-friendly website should at least:

You can use Google’s own tool to check whether your website is mobile-friendly:

https://testmysite.thinkwithgoogle.com/

For tips on how to make your website mobile-friendly, check out these articles:

Longer contents are shown to rank better than short ones.

This one seems simple but it’s not. Basically, research done by many parties shows that higher-ranking results tend to be longer than lower-ranking results.

But that doesn’t mean you can just stuff your content with filler to inflate the word count.

Long content tends to rank higher because it contains more information and value.

So include as much as is relevant to the topic, but don’t make your content long just for the sake of making it long.

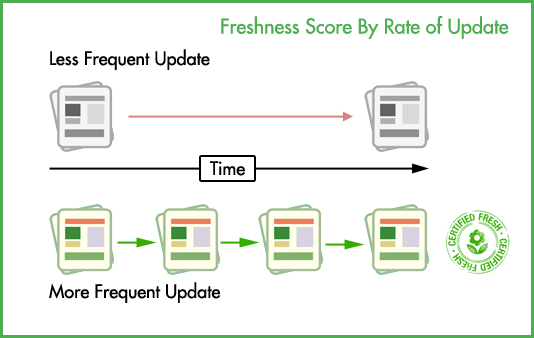

Freshness score takes into consideration how new and updated your contents are.

To put it simply, Freshness is a score that reflects how recent and up to date your content and website are.

The Freshness algorithm aims to deliver the most up-to-date results for queries that require up-to-date answers (also known as QDF, Query Deserves Freshness).

Things you can do to keep your website’s Freshness score high include:

You can learn more about Freshness score here:

Everything You Need To Know About Google Freshness Algorithm

Optimizing your URL means keeping the character count low while still retaining the necessary keywords and staying descriptive.

Your website URL is one of the easiest aspects you can optimize.

Rather than being random strings or numbers, make sure that your URL:
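As a rough sketch of turning a post title into a short, descriptive URL slug, the helper below keeps the meaningful words and drops filler. The stop-word list and the word limit are assumptions picked for illustration, not recommended values.

```python
import re
import unicodedata

# Hypothetical list of filler words to drop from the slug.
STOP_WORDS = {"a", "an", "the", "and", "or", "of", "to", "in", "for"}


def slugify(title: str, max_words: int = 8) -> str:
    """Build a lowercase, hyphen-separated slug from a title."""
    # Strip accents so the slug stays plain ASCII.
    ascii_title = (
        unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    )
    words = re.findall(r"[a-z0-9]+", ascii_title.lower())
    keywords = [word for word in words if word not in STOP_WORDS]
    return "-".join(keywords[:max_words])
```

For example, `slugify("The 29 On-Page SEO Practices of 2017!")` gives `29-on-page-seo-practices-2017`, which is readable to both people and crawlers.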

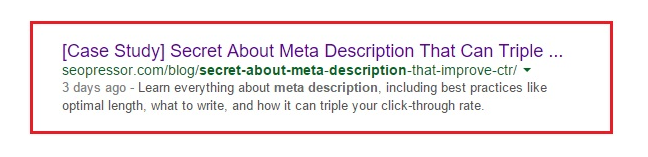

Your meta description might be picked up by search engines and displayed as the search snippet. This can help describe your content better and increase your click-through rate.

A meta description is a piece of code you include in your webpages to describe their content.

It looks something like this:

<meta name="description" content="Hi! I'm a meta description!"/>

Meta description is important as it might be used as the snippet in your search result.

Having a good meta description can help improve your click-through-rate and indirectly improve your ranking.
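If you want to check which pages actually have a meta description, a small script can pull it out of the HTML. This is a minimal sketch using Python's standard `html.parser`; the class and function names are my own.

```python
from html.parser import HTMLParser


class MetaDescriptionParser(HTMLParser):
    """Remember the first <meta name="description"> found in a page."""

    def __init__(self):
        super().__init__()
        self.description = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        is_description = (attributes.get("name") or "").lower() == "description"
        if tag == "meta" and is_description and self.description is None:
            self.description = attributes.get("content") or ""


def get_meta_description(html: str):
    """Return the meta description text, or None if the page has none."""
    parser = MetaDescriptionParser()
    parser.feed(html)
    return parser.description
```

Running it over your pages and flagging every `None` or empty result is a quick way to find pages where the search engine has to guess a snippet on its own.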

Here’s how you should create your meta description:

For further reading on optimizing your meta description:

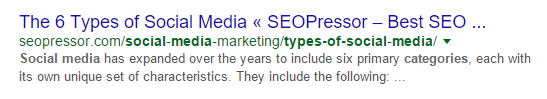

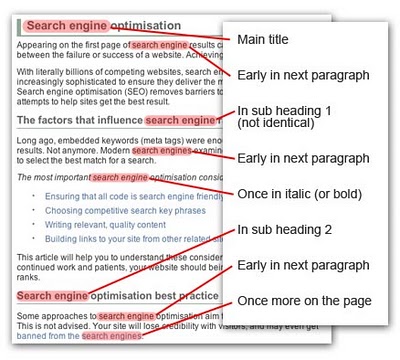

Optimizing your title includes keeping it short, descriptive and containing the target/LSI keywords.

The study by Ahrefs shows no correlation between having the exact match keyword in the title and ranking. Does this mean that optimizing titles has no impact on ranking?

Not exactly.

Again, optimization evolves over time. If you think that optimizing titles only involves including the exact match keyword, then you’re a bit behind.

Optimizing titles can now be done using partial match (or separated) keywords as well as LSI keywords.

And I can agree with Tim from Ahrefs when he said that title optimization is not a major ranking factor anymore.

Which makes total sense given how many speculated ranking factors there are, and how small both the impact and the effort of this particular component are.

That being said, it’s not like we should stop optimizing our titles. It just means we have the flexibility to focus more on crafting human-centric, catchy titles rather than “technically correct” ones.

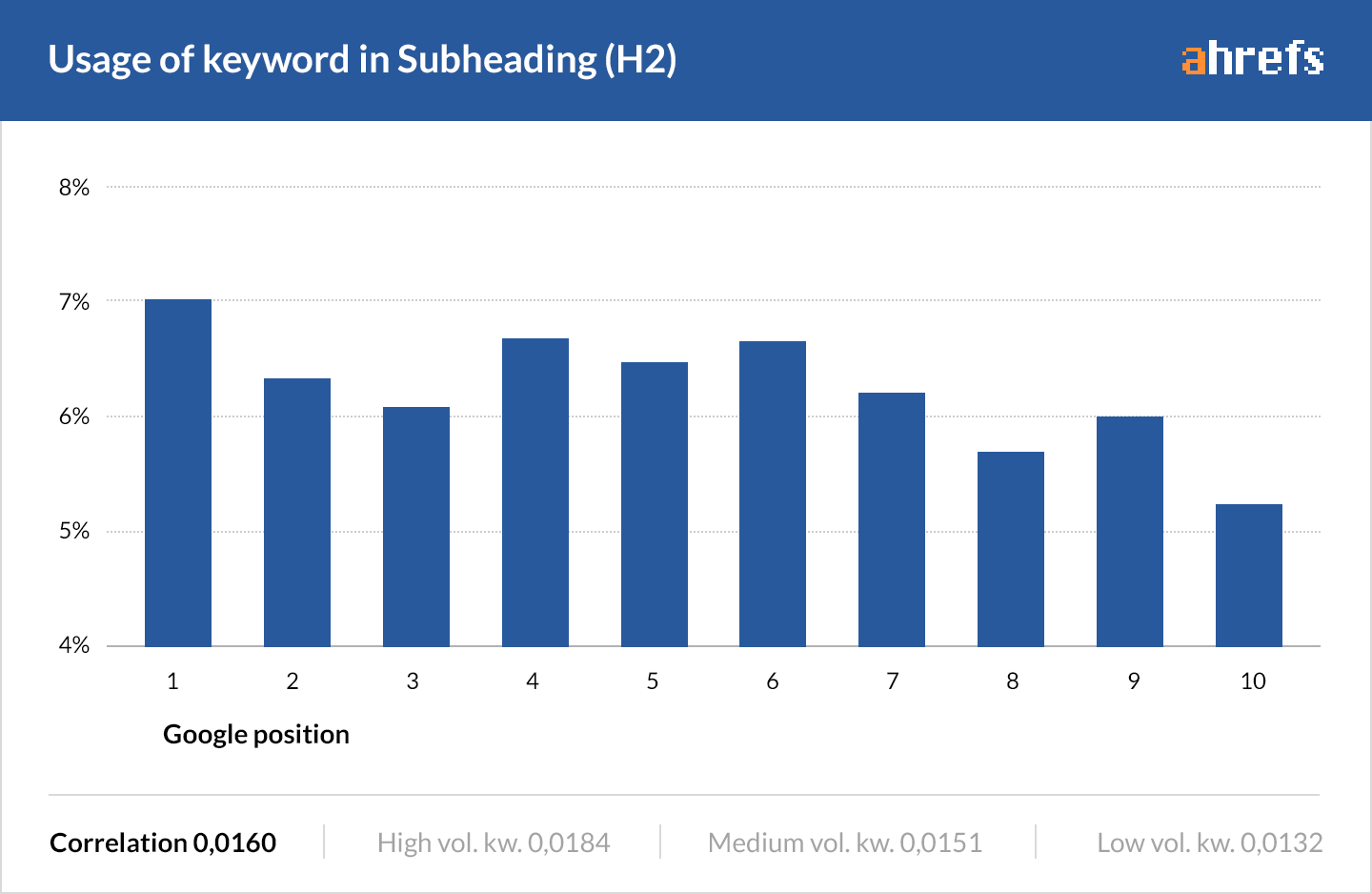

Exact match keywords in headings won’t help you rank. LSI keywords however, might.

Similar to titles, research has also shown that there’s no relation between having exact match keywords in the headers and ranking.

And the conclusion is also similar: craft helpful and meaningful headers that properly label what the sections are about.

Exact match keywords can be helpful most of the time but aren’t mandatory. Including LSI keywords and synonyms can help make headers more descriptive and meaningful.

Decorating your keywords highlights them for readers and search engines alike.

Keyword decoration, also known as keyword emphasis, is the practice of bolding, italicizing or underlining your keywords.

This is aimed at helping both human readers and search engines, SEO-wise.

Human readers tend to first scan through a text to get a general idea of it before actually reading it.

Decorated or emphasized keywords help them make a mental note of the essence of the content.

Search engine crawlers crawl through the code of your website instead of reading it like humans do.

They don’t actually understand content like we do, but they try to find whatever clues are available to work out the main point of any piece of content.

Code-wise, keyword decoration adds emphasis tags (bold tag, italic tag, etc.) to the keyword, and these will be among the “clues” picked up by search engine crawlers.

By knowing that your keyword is the focus of your content, search engines will be able to better match your content to queries related to that keyword.

As you might expect, keyword decoration alone is not a game-changing ranking factor. But its direct and indirect effects will surely contribute to your overall ranking score.

What’s this about? It’s simply using HTML code to format your content as either an ordered (numbered) or unordered (bullet point) list.

Similar to keyword decoration, grouping related chunks of information together helps both human readers and search engines know that they belong in the same category.

It’s massively pleasing to the eyes of a human reader. And code-wise, it’s neat and organized enough to be digested by search engine spiders.

I’ve even found a Google search engine patent that strongly suggests that Google is actually implementing this.

Micro and Macro Conversion are the ultimate goals a website should achieve.

How well your website converts your visitors is also an important sign used by Google to assess the quality of a website.

According to Google, conversion can be classified into two categories:

The best way to do this is to use Google Analytics to set up and track your website goals. This also means you should make your content as dynamic and interactive as possible.

Other than improving user experience, it can also improve your ranking by creating more trackable activity on your website.

Conversion methods you should add include:

Macro conversion

Micro Conversion

There are patents that suggest that Google also tracks how often a website gets bookmarked or favorited and how much user-generated data (cookies, temp files) a website is able to influence.

Placing your target keywords in strategic places like the beginning and end of your content lets Google know that they’re the focus of your content.

The Ahrefs research shows that 80% of the time, top-ranking results have their keyword in the first 100 words.

This reinforces the practice of including your keyword in the introduction of your content.

It is also a good idea to include your keywords at the end/conclusion part of your content. A patent shows that when creating a search snippet, Google tends to pick the beginning and ending part that contains the keyword.

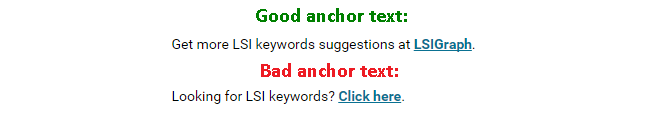

Make sure you optimize your anchor text to reflect the link it leads to.

Another way search engines analyze the main focus and theme of your content is by looking at the anchor text of the links in it. This includes both internal and external links.

So a key takeaway is when linking parts of your content, make sure to link parts that include your main and LSI keywords.

Avoid non-contextual anchor text such as “Click here” or “Read more”.

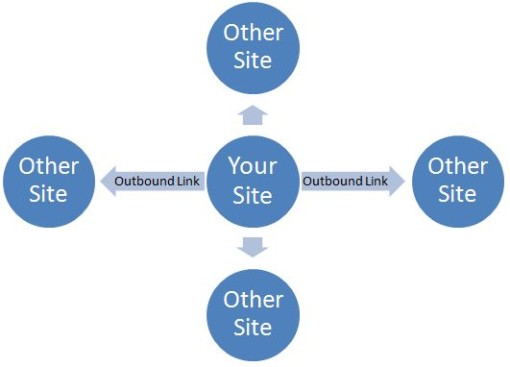

Where your links point to is also a clue search engines pick up to understand the focus of your website. Be sure to keep them thematically consistent.

Links are always a huge topic in SEO as Google values incoming links pointing to a website as a sign of quality.

On the other hand, if your website links out too much, it can potentially drive traffic away, as you are practically putting “exit” doors everywhere.

But search engines also assess the theme of your content by the destinations your links point to.

So manage your outbound links and limit them to the parts that are thematically related to the main focus of your content.

The destination where your links are pointing to should also be taken into consideration. Relevant and high quality websites should be the focus.

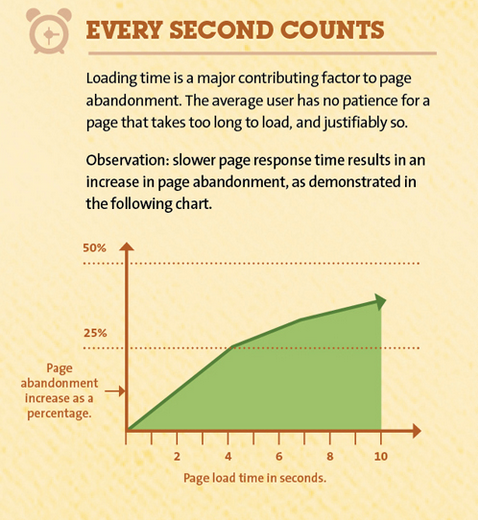

The longer they wait, the higher the chance they’ll leave.

The research by Ahrefs shows that most top-ranking results load in 0.7 seconds or less.

They concluded that the correlation between page load time and ranking is small at best.

My opinion is that search engines might not rank results based on which site loads faster, but websites that load slower than a threshold value might get their ranking score knocked down a bit.

In other words, you’re good as long as you don’t put the user into a “waiting” mode.

Having a comment section will keep your visitors engaged even after they’ve read the contents.

One of the on-page SEO features you should have on your website is a (preferably active) comment section.

A comment section can contribute so much to your on-page SEO:

Not to mention value to readers:

However, great care should be taken in moderating the comment section. Some tips:

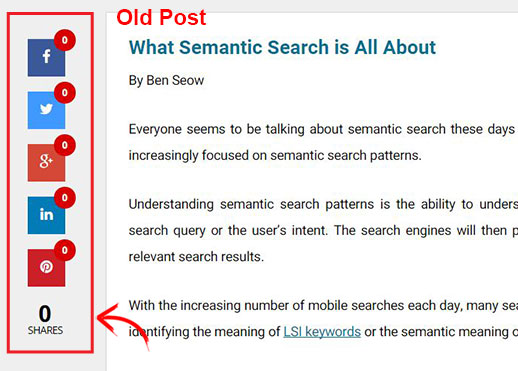

Making it easy for people to share your content on social media is important to bring in traffic.

Social shares do not count as a direct ranking factor. This is because links from social media sites are usually nofollow links that don’t directly influence ranking.

But that being said, social shares, nofollow or not, can bring in tons of traffic and exposure. Now those will definitely improve your ranking.

So it’s essential to make it easy for your readers to share your contents.

A lot of widgets are available on the market that you can include to boost social sharing:

Social count can assure readers that a piece of content is good. Just compare this:

And this:

No previous shares might make readers think twice to share even if they might like the content.

Content that has been up for months but has fewer than 10 social shares doesn’t have a good ring to it.

Even if a reader might like it, they will hesitate to share an unpopular piece. Worse, they might even question their initial judgement!

Social count can also be used as a metric to genuinely assess the interest in any content piece.

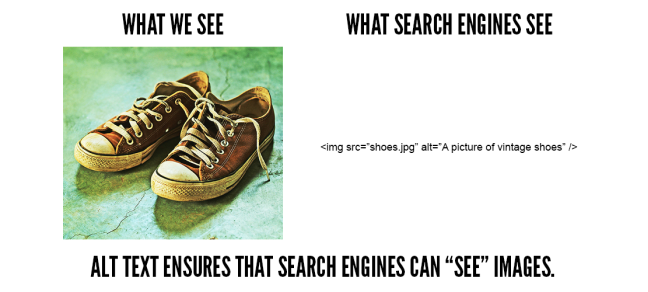

Alt tags help describe an image to search engine crawlers.

Remember when I mentioned search engine crawlers earlier? Unlike human readers, they lack a critical feature for properly scanning through website content: a pair of good eyes!

This means that those poor spiders are blind to any images you include in your content. They know that the images are there but they don’t know what they show.

So what can you do with your image to improve your on-page SEO?
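A practical first step is finding the images that have no description at all. The sketch below, using Python's standard `html.parser`, lists every `<img>` whose `alt` attribute is missing or blank. The names are hypothetical, and treating a blank `alt` as a problem is an assumption: purely decorative images may leave it empty on purpose.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> without usable alt text."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = attributes.get("alt")
        if alt is None or not alt.strip():
            self.missing.append(attributes.get("src") or "(no src)")


def images_missing_alt(html: str) -> list:
    """Return the image sources that need alt text."""
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing
```

Each source it returns is an image a crawler currently cannot "see".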

Robots.txt instructs search crawlers on where they should and shouldn’t go. This will allow search engines to view only the best side of your site.

Search engine crawlers will probe your website and index whatever they find. There are times when you don’t actually want crawlers to do that.

For example, when you first create a website and haven’t optimized everything yet, you might want to hold search engines off from crawling and indexing your unprepared website.

Once a website is crawled and indexed it will be scored and recorded. This might affect future ranking score and we don’t want that.

Other reasons why you want to control crawler behaviour:

So how do we manage them?

We can politely ask the crawlers by using robots.txt. Robots.txt is a text file used to establish rules for visiting crawlers. Things you can do with robots.txt include:

Robots.txt is still relevant and useful today. While it’s not a ranking factor per se, you need it to send crawlers only to the desired parts of your website (and hide the bad ones).
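To see how a crawler would read such a file, you can test your rules with Python's standard `urllib.robotparser` before publishing them. The rules, paths and `example.com` URLs below are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep every crawler out of drafts and the admin area.
ROBOTS_TXT = """\
User-agent: *
Disallow: /drafts/
Disallow: /admin/

Sitemap: https://example.com/sitemap.xml
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check whether the given rules let this user agent fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)
```

With these rules, a blog post URL is allowed while anything under `/drafts/` is not. Keep in mind that robots.txt is a polite request, so well-behaved crawlers follow it but nothing forces them to.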

Broken links disrupt a reader’s activity by sending them to a dead end. Managing broken links will help improve your website’s user experience.

The Ahrefs research shows that only 2% of top 10 Google search results contain broken links. That’s close to none, but it’s not zero, so there’s no hard rule saying a website can’t rank at all with a broken link.

But still, the tolerance level is minuscule.

That being said, I believe broken links have more to do with a bad user experience that indirectly affects the search ranking.

Hitting a broken link or a 404 page is heavily annoying and disruptive to visitors browsing your site. It’s akin to hitting a wall, and most of the time users forget what they were doing before hitting the dead end.

This leads them to drop reading altogether, resulting in a high bounce rate and low time on page.

Managing and detecting broken links is crucial to user experience and trust in your website.
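Detection is easy to automate. Here is a minimal sketch of a broken link checker built on Python's standard library: it collects the links on a page and reports the ones that answer with an error status. The names are hypothetical, and using `HEAD` requests is an assumption that keeps the check light, although some servers reject them.

```python
from html.parser import HTMLParser
from urllib.error import HTTPError, URLError
from urllib.parse import urljoin
from urllib.request import Request, urlopen

SKIPPED_PREFIXES = ("#", "mailto:", "tel:", "javascript:")


class LinkCollector(HTMLParser):
    """Collect absolute URLs from the <a href> tags of a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        href = dict(attrs).get("href")
        if tag == "a" and href and not href.startswith(SKIPPED_PREFIXES):
            self.links.append(urljoin(self.base_url, href))


def http_status(url, timeout=10.0):
    """Return the HTTP status code, or None if the server cannot be reached."""
    try:
        with urlopen(Request(url, method="HEAD"), timeout=timeout) as response:
            return response.status
    except HTTPError as error:  # 4xx and 5xx answers end up here
        return error.code
    except URLError:
        return None


def find_broken_links(html, base_url, get_status=http_status):
    """Return the links that are unreachable or answer with status >= 400."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    broken = []
    for link in collector.links:
        status = get_status(link)
        if status is None or status >= 400:
            broken.append(link)
    return broken
```

The `get_status` parameter is there so the checker can be tried out with canned responses before pointing it at a live site.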

Google takes internet security seriously. By securing your website with HTTPS, you make your website safer both in effect and in the eyes of Google.

Using strong, secure HTTPS encryption is one of the rarer on-page SEO factors actually confirmed by Google.

Making the internet a more secure place is a vision Google takes very seriously. So it’s no surprise that HTTPS is used and announced as a ranking signal.

Although HTTPS might not make or break your website ranking, remember that SEO involves practicing as much optimization as possible to squeeze every drop out of your website.

Implementing HTTPS on your site can be done through these 5 simple steps:

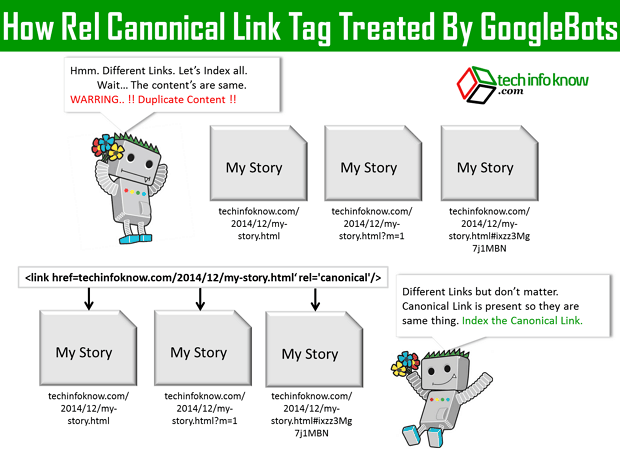

Canonical links ensure your link juice goes where you want it to.

A slightly more advanced concept, canonicalization is basically an answer to the issue of duplicate content.

Say you wrote one great article with the title “How Pokemon GO Can Improve Local SEO” and you post it on your company’s website.

But at the same time you also want to post a copy on your personal portfolio website. Now you have duplicated content across 2 domains.

Google will have to pick only 1 of these 2 to rank, as including both won’t help searchers.

Without canonicalization, Google might decide for itself which one to pick, and it might not be the version you prefer.

To avoid this, we add canonical tags in the duplicate content(s), pointing to the main content.

This tag will be read by search crawlers and it will tell them, “Hey this is a duplicate content. The original is

You can read more on canonicalization here: https://seopressor.com/blog/canonical-links-and-canonicalization/
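For reference, the canonical tag itself is a single `<link rel="canonical">` element placed in the `<head>` of the duplicate page. The tiny helper below renders it; the function name and the `example.com` URL are placeholders.

```python
from html import escape


def canonical_tag(original_url: str) -> str:
    """Render the tag that points a duplicate page at the original URL."""
    return f'<link rel="canonical" href="{escape(original_url, quote=True)}">'
```

In the Pokemon GO example, the copy on your portfolio site would carry `canonical_tag("https://example.com/blog/pokemon-go-local-seo")`, pointing back at the company blog version.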

An XML sitemap tells search engines the complete hierarchy of your webpages. This will ensure all pages are crawled.

An XML sitemap is a powerful on-page SEO tool that automatically lets search engines know the structure of your website and whether any changes have been made.

Without sitemap, search engines need to rely on links pointing to the parts of your website in order to crawl and index them. This way, they might miss some parts of your site.

This can be avoided in two simple steps:

Generating an XML sitemap can be done using manual coding or various generator tools.
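If you go the manual route, a few lines of Python are enough. This sketch builds a basic sitemap with the standard `xml.etree.ElementTree` module, following the sitemaps.org format; the function name and the page list you feed it are up to you.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages) -> str:
    """Build sitemap XML from (url, last_modified) pairs.

    last_modified is a date string such as "2017-01-31".
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = last_modified
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body
```

Save the result as `sitemap.xml` at the root of your site, encoded as UTF-8, and regenerate it whenever pages are added or updated.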

Using a 301 redirect helps you keep the previous ranking score of your page when you replace it.

When you’re moving a webpage from one URL to another for whatever reason, you need to redirect the previous URL to the new one.

Yes, you could also just remove the original page and replace it with the new one. But if you do this, the new page’s ranking score will be reset, ignoring the original score.

Using a 301 Redirect will let search engines know that it is a new version of a content. This will allow search engines to preserve the page ranking for the redirected content.
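How you set up the redirect depends on your server, but the idea is always the same: answer requests for the old URL with status 301 and a `Location` header naming the new one. Here is a minimal sketch as a Python WSGI app, with a made-up redirect table.

```python
# Hypothetical mapping of retired paths to their replacements.
REDIRECTS = {
    "/old-seo-guide": "/blog/on-page-seo-guide",
}


def app(environ, start_response):
    """WSGI app that permanently redirects retired URLs."""
    path = environ.get("PATH_INFO", "/")
    target = REDIRECTS.get(path)
    if target is not None:
        # 301 tells browsers and crawlers that the move is permanent.
        start_response("301 Moved Permanently", [("Location", target)])
        return [b""]
    start_response("200 OK", [("Content-Type", "text/plain; charset=utf-8")])
    return [b"Page content goes here"]
```

To try it locally, you can serve it with the standard library: `wsgiref.simple_server.make_server("", 8000, app).serve_forever()`.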

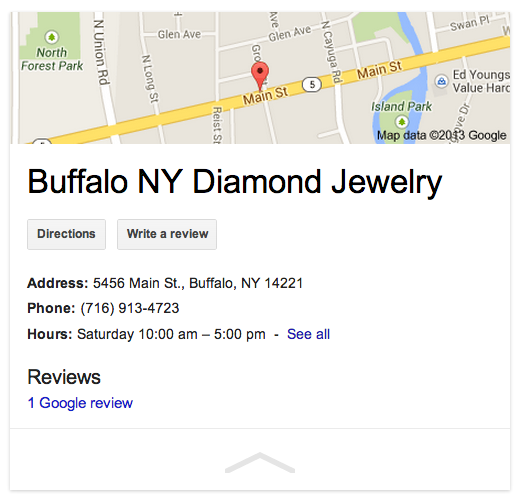

Properly setting up your local SEO helps your business be more visible on local searches, attracting customers to your location.

Local SEO is crucial if you depend a lot on getting business from local visitors. Getting recognized as a business operating in a particular area can give you an edge in showing up in local searches.

The basics of on-page local SEO are having your local business information present and consistent on your website. Required information includes:

Combined with off-page local SEO factors, this will ensure that your business is featured on the local SEO card as well as ranking in local search placements.

So that’s the current compilation of on-page SEO practices still working today. As you can see, a lot of things remain the same while some have evolved to become more complicated.

One trend that we are seeing is that SEO practices have started to become less black-and-white technical practices. The new on-page SEO factors tend to move towards what helps the user experience rather than simply checking boxes.

On the surface this makes things easier as we are allowed a more flexible use of keywords. But deep down, the scoring has just become more sophisticated, including complex items like readability and content theme.

I personally think that doing the technical stuff is a lot of hassle but at the same time, they still exist and they still contribute.

I find that automating the bulk of the optimization process allows us writers to focus on the thing that matters: creating great content.
